I finished my first trimester of lessons… I still have one more year to go... and maybe you are curious to know whether it was a good or bad decision... and I can sincerely tell you that it was a good one... in fact, it was the best decision I have made in a long time. I feel it was a good decision because I love business intelligence (as a set of small parts), and I found excellent colleagues and excellent teachers.

I am curious to see whether the quality of the teachers will stay the same in the next trimesters, but I will keep you informed! :-)

As I told you before, in this first trimester I had three classes:

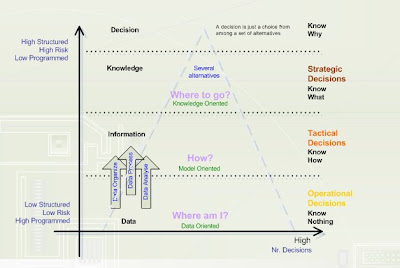

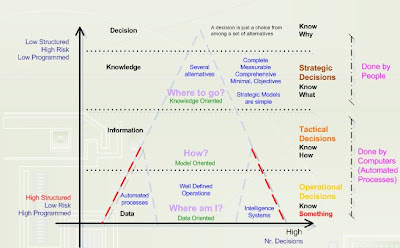

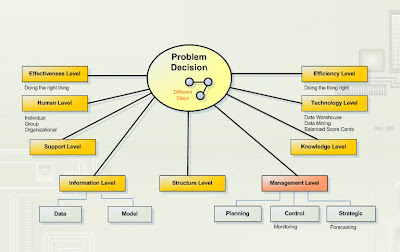

1. Decision Support Systems

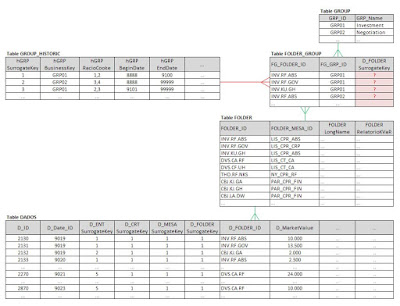

2. Data Warehouse Projects

3. Data Analysis (Statistics)

Sincerely, I like all three classes, but my main preference goes to the first two! Data Analysis is not difficult, I think, but you need to spend some time studying hard… The other two classes also require hard study, but in my case they are a way to get better at my current daily job as a BI developer at my company. So, I’m very motivated!